Humans + AI with Ross Dawson

Humans + AI in banking, decision architectures, Enterprise AI Playbook, and more

This week I am in Bali and Kathmandu giving keynotes that offer a macro view of the future of banking (and, of course, the opportunities for banks and society), drawing substantially on Humans + AI themes. Early next week I give another keynote in Sydney, specifically on 'Humans + AI in the future of banking'.

Today I am also finishing my chapter on Humans + AI in banking, my contribution to Brett King's forthcoming book 'Bank 5.0'. I work across many industries, but the impact of Humans + AI is particularly pointed in banking and financial services, so it is good to be getting these ideas out there.

Governance and compliance issues come to the fore in finance, offering many lessons for other industries. I'll share some more specific thoughts on this soon.

Have a great week! Ross

💡Signal of the week

I've been diving deep into Humans + AI Decision Making Architectures, working on what will likely become an extensive enterprise guide and report.

One critical point that has emerged in defining these architectures is my belief that treating 'final human approval' as the 'human-in-the-loop' structure is deeply flawed. Serving as a checkpoint is not where humans add the most value.

This came into focus with a recent MIT paper, "Chaining Tasks, Redefining Work: A Theory of AI Automation," which redesigns workflows on the (simply stated) assumption that we can chain several tasks before human approval rather than requiring approval at each step. We need far better Humans + AI workflow design than that.

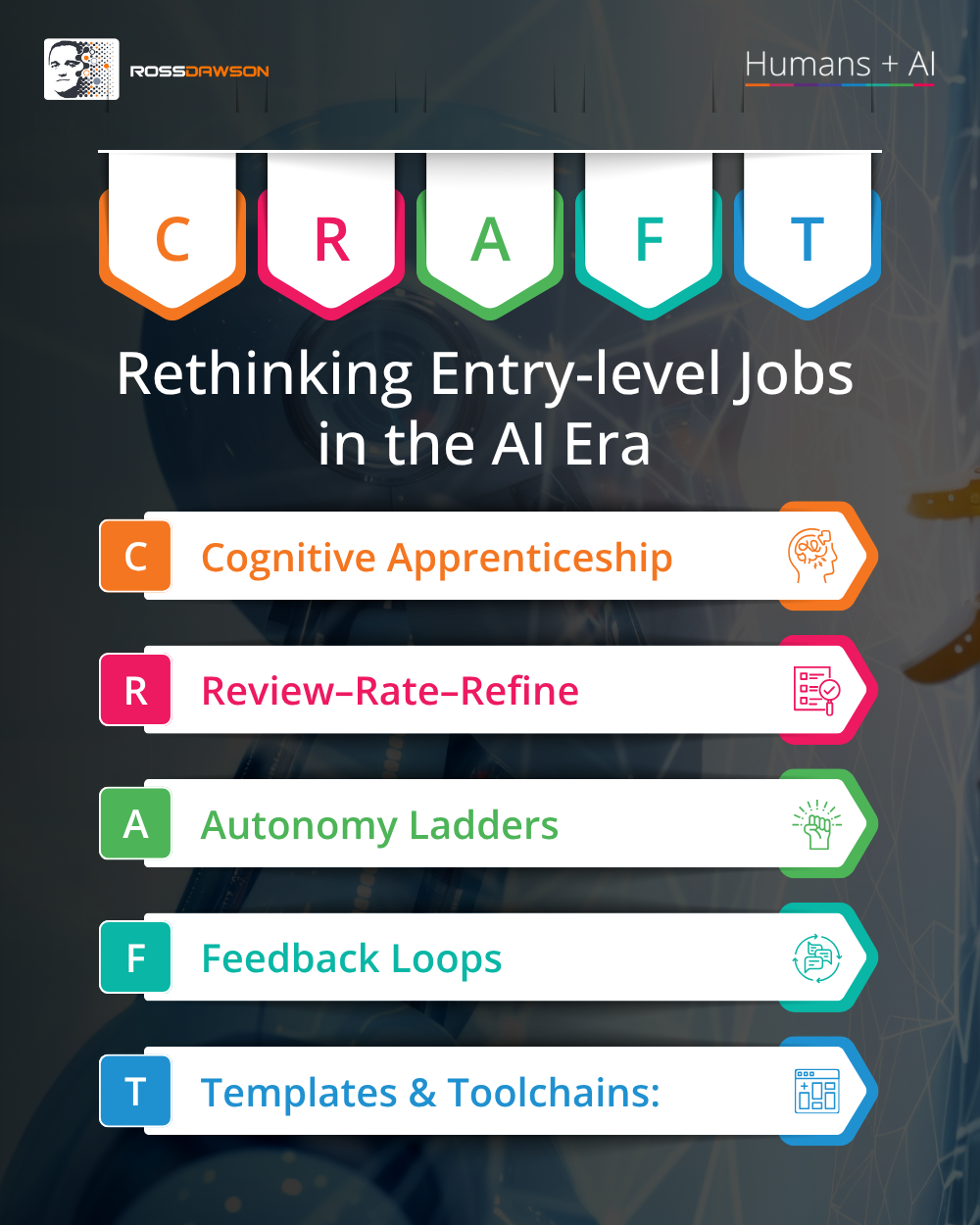

🖼️Framework: CRAFT: Rethinking Entry-Level Jobs in the AI Era

Entry-level roles are under real pressure. Anthropic CEO Dario Amodei warns AI could eliminate half of all entry-level white-collar jobs within five years, and early Stanford research suggests that shift is already underway, making this an urgent conversation for every team.

The CRAFT framework helps leaders and educators redesign entry-level positions for an AI-integrated workplace, rather than simply watching them disappear. Use it when onboarding a new hire or auditing junior roles to ensure they build genuine human skills alongside AI tools.

⚡This week’s signals

Walmart is training its entire two-million-person workforce on agentic AI tools, framing the initiative around improving customer experience rather than cutting headcount. The retailer is treating AI fluency not as a specialist skill but as a baseline capability expected of every employee.

The largest private employer in the United States is making Humans + AI capability a workforce-wide foundation, redefining what AI literacy at scale looks like in practice.

Automation-first AI strategies can deliver early efficiency gains, but augmentation-led approaches tend to outperform over time because sustained performance depends on how people feel about their work and whether top talent stays. Ignoring employee perception of AI quietly compounds attrition risk until it shows up in results.

The augmentation-versus-automation choice is not a slogan — it is a measurable bet on whether your best people stay long enough for AI to compound their value.

A study tracking industries with high AI exposure from 2017 to 2024 found 10% productivity gains alongside nearly 4% job growth and 5% wage growth — but only in sectors where AI was designed to complement human work rather than operate autonomously. Where AI ran more independently, productivity improved without the accompanying human dividend.

Real-world employment and wage data from sectors already deep into AI adoption make the design principle clear: complementarity between humans and AI generates shared gains, while autonomy concentrates them.

📊AI in Enterprise Report

The Enterprise AI Playbook: Lessons from 51 Successful Deployments

Stanford's analysis of 51 real enterprise AI deployments cuts through theory to reveal what actually separates high-performing implementations from expensive failures.

• Organisational challenges (change management, data quality, process redesign), not technical ones, account for 77% of the hardest deployment problems.

• AI systems handling 80%+ of the workload autonomously deliver a 71% median productivity gain, more than double the 30% seen in models requiring full human approval.

• 61% of successful deployments were preceded by at least one failed attempt, suggesting that early failure is often part of the path to success rather than a verdict on it.

Organisations must invest as heavily in redesigning processes and culture as in the AI itself — and treat first failures as tuition, not termination events.

🔬Latest Humans + AI Research

Economics of Human and AI Collaboration: When is Partial Automation More Attractive than Full Automation?

This model shows that partial human-AI collaboration is often the economically optimal end state, not just a stepping stone to full automation.

• AI accuracy costs rise sharply as you push toward full automation, making a human-AI blend cheaper than either extreme for most task portfolios.

• Low-complexity tasks are strong candidates for high AI substitution, but high-complexity tasks reach equilibrium with only limited AI involvement, so your automation strategy should vary by task type rather than be uniform.

• Human-AI collaboration isn't a temporary compromise while AI matures; in this model it is a stable economic equilibrium that persists even as AI improves.

How do these equilibria shift as AI accuracy curves flatten with next-generation models, and does that tip more high-complexity tasks toward fuller automation?

🌐From Humans + AI Community

A response from Paul Epping, former Singularity University faculty member, to Peter Diamandis's recent "solutionist" writings on the age of abundance, and why we need a survival guide if the future is to be abundant...

🎧Humans + AI Podcast

Michael Gebert on designing freedom, human self-determination, cognitive sovereignty, and systems of agency (AC Ep40)

Why you should listen

What does it actually mean to remain the author of your own decisions in a world increasingly shaped by algorithmic nudges and AI-generated recommendations? This episode digs into the tension between convenience and autonomy, exploring how the systems we build, and the ones built around us, quietly redistribute agency in ways we rarely notice. Gebert's notion of "cognitive sovereignty" recasts AI not as a tool but as a design challenge with profound stakes for human identity. After listening, you'll start asking who, or what, is really making your choices.

AI-powered strategy intelligence for boards and executive teams — apply for Fraxios beta

Most organizations have a strategy. Almost none have a strategy process that stays current as conditions change. Fraxios is a new platform that structures your strategy as a living architecture across every dimension of your organization, so your leadership team can explore options, surface tensions, and evolve your thinking continuously rather than waiting for the next planning cycle. Now in private beta, working with a small number of organizations on real strategic challenges.

Thanks for reading!

Ross Dawson and team