- Humans + AI with Ross Dawson

Human-AI collaboration, thinking with machines, cognitive impact, decision architectures, and more

“Intelligence is the ability to adapt to change.” — Stephen Hawking

Keynotes on human-AI collaboration

This week I gave a keynote at Asana’s Work Innovation Tour where they announced their new AI Teammates feature, bringing AI agents alongside humans in workflows.

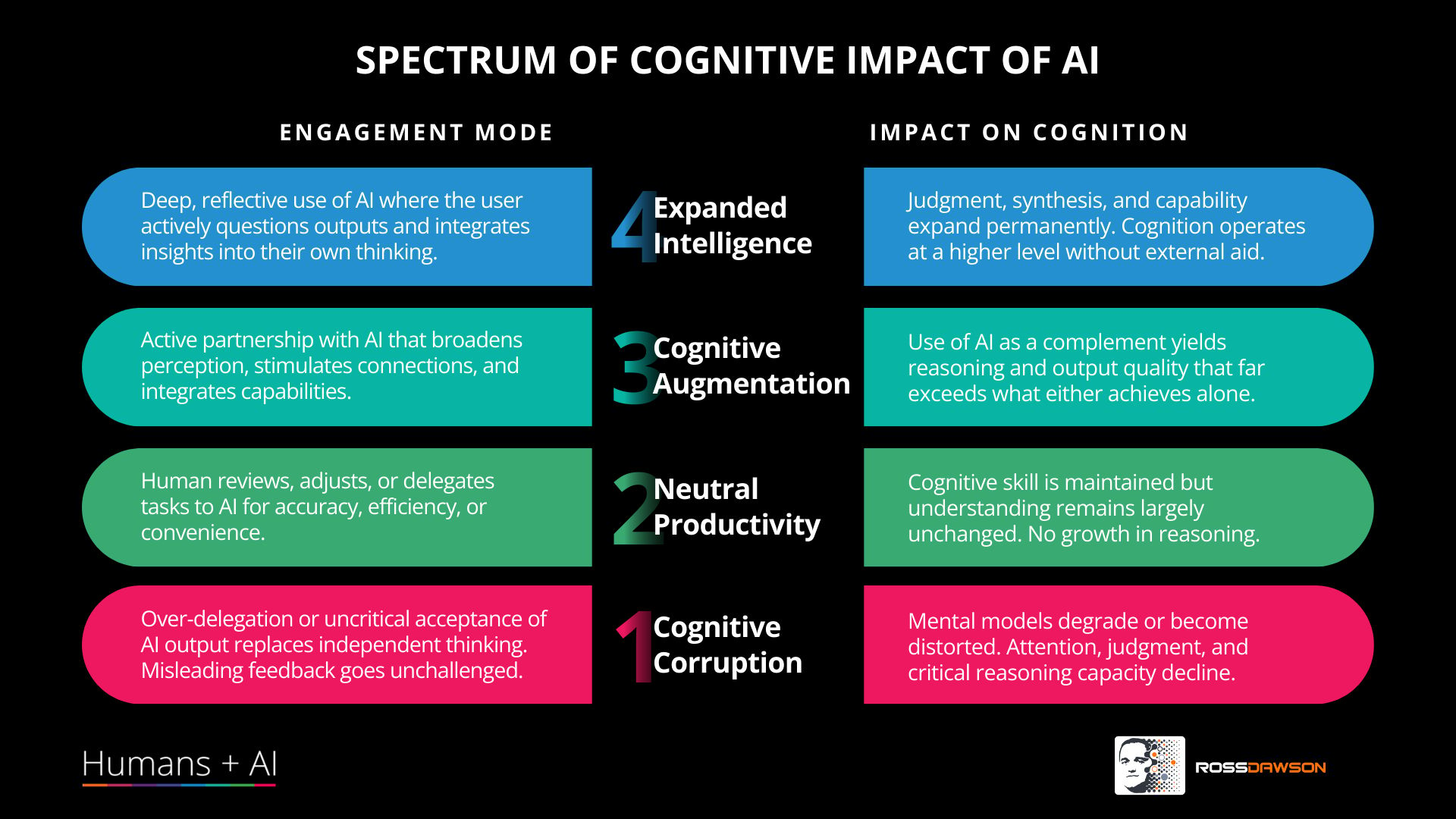

One of the frameworks I shared that resonated most was my levels of cognitive impact of AI, which you can see below. It points to the positive potential of AI as well as the real negative impact that many are experiencing. I was delighted with the feedback I received from attendees afterwards, with a number saying they had never before had such a positive view of AI's potential.

I have quite a few more interesting keynotes coming up and I’ve received several more enquiries stemming from the Asana gig.

As noted last week, a new newsletter format is under way. The improved version is looking good for next week, so stay tuned!

Have a great week,

Ross

📖In this issue

Framework: Four Levels of Cognitive Impact of AI

Research Paper: Building Machines that Learn and Think with People

Humans + AI Podcast: Joanna Michalska on AI governance, decision architectures, accountability pathways, and neuroscience in organizational transformation

💡Framework: Four Levels of Cognitive Impact of AI

You may recall that I created an 8-level Spectrum of Cognitive Impact of AI a little while back. As a frequent keynote speaker, I need to communicate key ideas in compact formats that can have a real impact. For keynotes I use this simplified 4-level framework, drawing out the most important distinctions: negative, neutral, positive with AI use, and positive after (but not with) AI use.

💡Research paper of the week:

Building Machines that Learn and Think with People

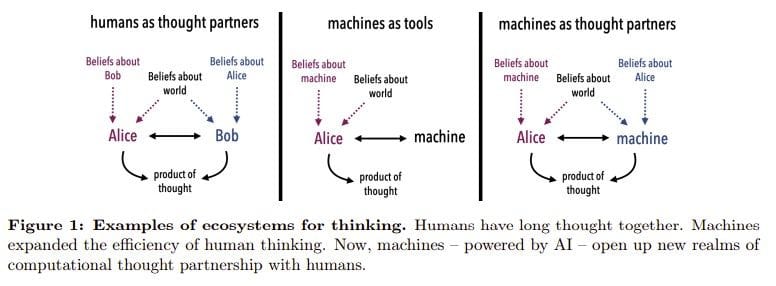

“What do we want from machine intelligence? We envision machines that are not just tools for thought, but partners in thought: reasonable, insightful, knowledgeable, reliable, and trustworthy systems that think with us.”

Key takeaways from the paper include:

🧭 Start by choosing the collaboration mode.

The paper says human-AI thought partnership is not one thing: it breaks it into 5 modes—planning, learning, deliberation, sensemaking, and creation/ideation. Each mode has different practical challenges, from goal inference and curriculum pacing to consensus formation, explanation, and brainstorming.

🧠 Use the paper’s three-part test.

A real thought partner should do 3 things: understand the user, be understandable to the user, and share a reality-grounded model of the world or task. The paper presents these as its central design criteria, not as nice-to-have extras.

👤 Model the person, not just the prompt.

The authors argue that systems should explicitly model the human collaborator as an agent with beliefs, goals, knowledge, and resource limits. In the paper’s examples, WatChat infers a programmer’s mistaken mental model, while CLIPS infers shared goals from both language and actions.
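To make "modeling the person" concrete, here is a minimal sketch of inferring a collaborator's goal from their observed actions using Bayes' rule. This is not the method used by WatChat or CLIPS; it is a toy illustration, and the function name `infer_goal`, the `rationality` parameter, and the kitchen goals and actions are all hypothetical, chosen only for this example. It assumes the person picks actions roughly in proportion to how useful they are for their goal.

```python
from math import exp


def infer_goal(prior, action_utilities, observed_actions, rationality=2.0):
    """Update a belief over a person's goals after watching their actions.

    Assumes a softmax-rational agent: the higher an action's utility for a
    goal, the more likely someone with that goal is to choose it.
    All names and numbers here are illustrative assumptions.
    """
    posterior = dict(prior)
    for action in observed_actions:
        for goal in posterior:
            utils = action_utilities[goal]
            # Likelihood of this action under this goal (softmax over utilities).
            normalizer = sum(exp(rationality * u) for u in utils.values())
            likelihood = exp(rationality * utils[action]) / normalizer
            posterior[goal] *= likelihood
        # Renormalize so the beliefs sum to 1.
        total = sum(posterior.values())
        posterior = {g: p / total for g, p in posterior.items()}
    return posterior


# Hypothetical example: which dish is the collaborator preparing?
prior = {"make_salad": 0.5, "make_soup": 0.5}
action_utilities = {
    "make_salad": {"grab_lettuce": 1.0, "boil_water": 0.0},
    "make_soup": {"grab_lettuce": 0.0, "boil_water": 1.0},
}
posterior = infer_goal(prior, action_utilities, ["grab_lettuce"])
print(posterior)
```

After seeing the person grab lettuce, the belief shifts strongly toward the salad goal. A system that tracks beliefs like this can offer help that fits what the person is actually trying to do, instead of responding only to the literal prompt.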

🛠️ Don’t expect LLM scale alone to get you there.

The paper says scaling foundation models with feedback and web traces can produce fluent systems that mimic human behavior, but not ones that robustly reason about the world or other minds. Its proposed alternative is to combine foundation models with structured models, probabilistic programming, and goal-directed search.

🚦 Design the workflow around the AI, not just the AI itself.

The paper says infrastructure matters: when the AI should be used, whether the human or machine should start, and how reliance should be communicated are all part of the design. It also flags 3 main risks—reliance and access, anthropomorphization, and misalignment—and says evaluation should include real interaction, not just static outputs.

🎙️This week’s podcast episode

Joanna Michalska on AI governance, decision architectures, accountability pathways, and neuroscience in organizational transformation

Why you should listen

Joanna Michalska has worked extensively on the practical realities of implementing AI governance, especially in decision-making, and brings powerful insights into how we can set guardrails that unleash value creation in organizations.

Thanks for reading!

Ross Dawson and team