- Humans + AI with Ross Dawson

Human agency, bot battles, rise of the human-AI workforce, GenAI upskilling and more

Earlier this week I completed three keynotes in seven days across three countries, all on Humans + AI in the future of banking.

Financial services is among the most intensely regulated industries, which drives a strong focus on the governance of decisions at every layer of the organization. This is useful, as the distinctions in decision transparency, oversight, accountability, and quality can be applied well beyond financial services.

I'll shortly be sharing some of my work on Humans + AI decision-making in a format ready for implementation.

Have a great week! Ross

💡Signal of the week

There are numerous signs that the AI acceleration is real. One prominent example is Mozilla's report on using Claude Mythos, which identified around 10x as many vulnerabilities as in any previous month.

Mythos is not hype. The race to establish cyber defences before this class of model becomes more generally available must be intense.

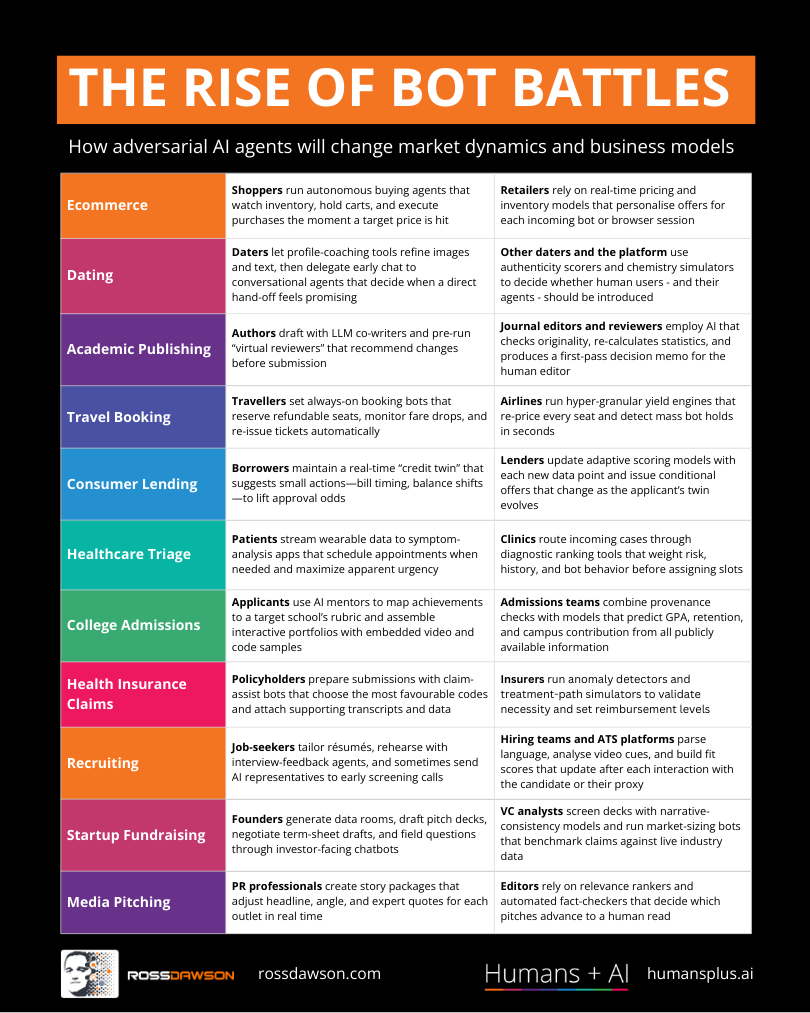

🖼️Framework: The Rise of Bot Battles: 11 Domains of Adversarial AI Agents

As AI agents increasingly act on behalf of buyers, sellers, employers, negotiators, and more, understanding where these automated systems will clash — and how — becomes a critical strategic advantage.

The Rise of Bot Battles maps out 11 domains where adversarial AI interactions are already emerging or soon will be, from hiring and pricing to fraud detection and content moderation. It helps you anticipate how your own market might shift when both sides of a transaction deploy intelligent agents.

⚡This week’s signals

Anthropic has released ten ready-to-run agent templates covering the most time-consuming work in financial services, including pitchbooks, KYC screening, month-end close, and valuation review. Each is bundled with skills, connectors, and subagents, and delivered as plugins inside Excel, PowerPoint, Word, and Outlook. Pre-built integrations with data providers including FactSet, Moody's, and Dun & Bradstreet mean the path from pilot to production is now measured in days rather than months.

Signals that the unit of AI work is shifting from the prompt to the template — and that whoever controls the reference architecture controls how an entire profession gets rewired.

Google DeepMind has proposed a 'triadic care' model in which AI agents work under physician authority alongside doctor and patient, backed by a randomised study of 120 simulated telemedical encounters and a primary-care benchmarking suite. The system matched or exceeded primary care physicians across the majority of evaluated dimensions, though it trailed on detecting red flags and guiding critical physical examinations.

Signals that the credible frame for medical AI is now triadic — patient, physician, agent — and that the open design question is not raw capability but precisely where physician oversight remains structurally necessary as routine tasks get absorbed.

A new McKinsey Global Institute report finds AI could automate more than half of US working hours today across most professions, yet roughly half of work remains beyond current technology. The report identifies the key organisational unlock as treating speed as a deliberate strategy, rather than waiting for certainty before acting at scale. Its accompanying argument is that scattered pilot experiments must give way to a leadership-aligned value thesis prosecuted across functions simultaneously.

Signals that the productive frame is no longer 'augment versus replace' but 'super skill' — humans staying valuable not by holding the same baseline but by using AI to lift their performance faster than the agent layer commoditises it.

Wharton researchers documenting 'cognitive surrender' across three experiments with 1,372 participants found that when an AI gave a wrong answer, users' accuracy fell 15 points below the no-AI baseline — while their confidence simultaneously rose. Crucially, accuracy incentives and immediate feedback substantially reduced the effect, pointing to organisational design rather than better models as the primary remedy.

Signals that the deeper risk of AI in knowledge work is not error in the model but the suppression of the human 'something's off' signal — and that protecting judgment is an organisational design problem, not a technology one.

📊AI in Enterprise Report

Agents, human agency, and the opportunity for every organization (2026 Work Trend Index Annual Report)

With AI adoption plateauing for many organisations, Microsoft's largest Work Trend Index to date isolates why individual AI skill alone isn't translating into business results.

• Organisational factors — culture, manager support, talent practices — drive more than twice the AI performance impact of individual mindset.

• Only 19% of knowledge workers occupy the 'Frontier' zone where personal AI capability and organisational readiness mutually reinforce each other.

• One in ten AI-fluent workers are actively blocked — their skills outpace what their organisations can absorb or act on.

Investing in AI training without redesigning management structures, workflows, and culture leaves most of that investment stranded in individuals who cannot convert capability into outcomes.

🔬Latest Humans + AI Research

Upskilling with Generative AI: Practices and Challenges for Freelance Knowledge Workers

Freelancers are your canary in the coalmine for how AI reshapes skill acquisition across all knowledge work.

• Workers use gen AI to scaffold exploratory learning—breaking down unfamiliar domains quickly—but distrust it enough to keep human sources as their validation layer.

• The learning mindset is shifting from building durable expertise to achieving immediate market viability, a 'survival mode' that may hollow out deeper capability over time.

• AI-assisted upskilling creates an 'invisible competency' problem: workers gain real skills they cannot credibly signal or verify to clients and employers.

How can credentialing systems evolve fast enough to make AI-assisted learning legible—and trustworthy—in competitive talent markets?

🌐From Humans + AI Community

Kanella Salapatas sparked a rich discussion by asking: "What actually needs to be true for a Human-in-the-Loop control to be genuinely effective?"

This was also a core topic at the Campfire event this week, and the summary surfaces some deep insights. We will be running a community event on this topic soon.

🎧Humans + AI Podcast

David Vivancos on the end of knowledge, cognitive flourishing, resilient societies, and artificial democracy (AC Ep42)

Why you should listen

What happens to human identity when AI can outperform us on nearly every cognitive task we've traditionally valued?

This conversation digs into that unsettling question and refuses easy answers, exploring how societies might be redesigned around cognitive flourishing rather than productivity, what "artificial democracy" could mean for collective decision-making, and why resilience — not efficiency — should be the metric we optimize for. After listening, you'll find yourself questioning whether knowledge itself still means what you thought it did.

AI-powered strategy intelligence for boards and executive teams — apply for Fraxios beta

Most organizations have a strategy. Almost none have a strategy process that stays current as conditions change. Fraxios is a new platform that structures your strategy as a living architecture across every dimension of your organization. Your leadership team can then explore options, surface tensions, and evolve its thinking continuously rather than waiting for the next planning cycle. Fraxios is now in private beta, working with a small number of organizations on real strategic challenges.

Thanks for reading!

Ross Dawson and team