- Humans + AI with Ross Dawson

Context in Cowork, what the best AI users do, human extension, and more

This is the first issue of our newsletter in its evolved format. We're still working on improving it, so let us know any thoughts or feedback!

These are busy times in Humans + AI. I have four keynotes over the next two weeks spanning three countries, including in Indonesia and Nepal, where I will be addressing banking leaders on Humans + AI in banking and across the economy.

The Beta program for our Fraxios AI-augmented strategy platform will commence shortly. Check it out if you're interested in the potential for AI to enhance strategy and the strategy process.

Have a great week! Ross

💡Signal of the week

The last few days have perhaps been the most productive of my life, thanks to AI products released only days ago. Opus 4.7 is the new frontier model, and I've been working intensively with it on a major project. Claude Design is a game-changer, finally enabling good design of both online and offline content. The acceleration feels very real, but this power is available only to those who actually use it. Keeping pace is critical.

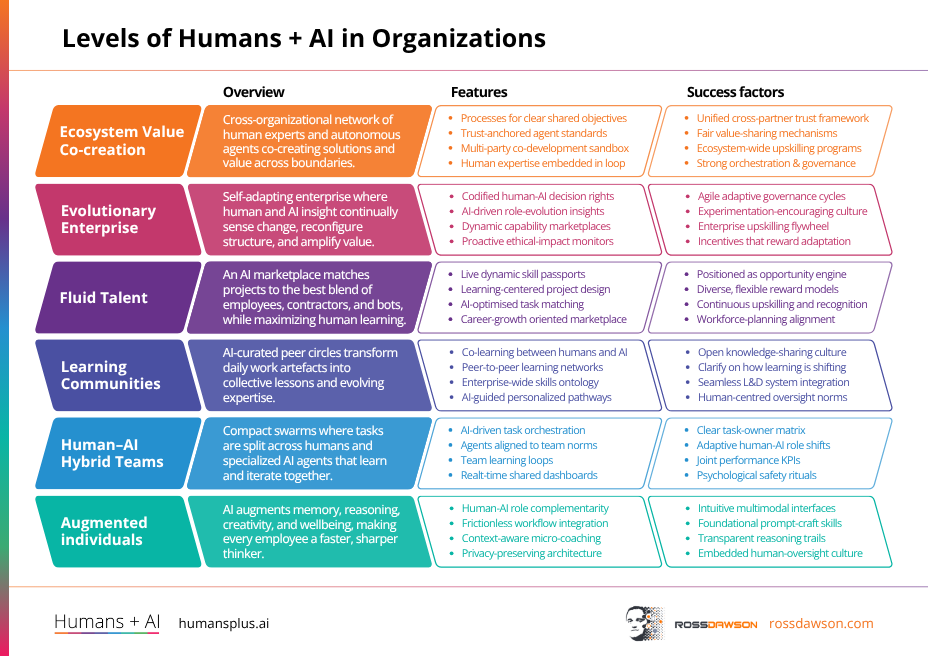

🖼️Framework: Levels of Humans + AI in Organizations

Most organizations are somewhere on a spectrum when it comes to how humans and AI actually work together — but few have a clear picture of where they stand or where they want to go.

The Levels of Humans + AI in Organizations framework helps you map your organization's current state and chart a realistic path forward. Use it when kicking off an AI strategy conversation with leadership to quickly align everyone on your starting point and ambition.

📅Managing Context and Identity with Cowork and GitHub

Ross will demo his setup combining Claude Cowork and GitHub: a system that works across machines and builds self-improving memory and systems for managing ventures, projects, idea development, and more.

⚡This week’s signals

An MIT FutureTech study evaluating 17,000+ outputs across 40 AI models found that systems handled 50% of text-based tasks at acceptable quality in 2024, rising to 65% in 2025, with projections of 80–95% by 2029. Rather than a wave of job elimination, the researchers describe AI as a rising tide that reshapes the task mix inside every role.

The pressing question is not which jobs disappear but which tasks inside every job get re-partitioned between human and machine — and who gets to decide how that boundary is drawn.

A Stanford SIEPR study of over 200,000 US households found that generative AI boosts efficiency on personal digital tasks by 76–176%, but the time freed up is flowing mostly into leisure rather than learning — and adoption is concentrated among younger, higher-income users. AI's productivity dividend is arriving first in private life, and it is landing very unevenly.

AI's most measurable productivity gains are appearing outside the workplace. Because the people capturing them are spending the freed-up time on leisure rather than learning, those who will build a genuine long-term capability advantage from AI are a much smaller group than the headline numbers suggest.

An eight-month study of 2,500 employees conducted with KPMG found that the most effective AI users treat the technology as a reasoning partner — making ambitious requests, delegating complex objectives, and using it as a general cognitive tool rather than a way to finish tasks faster. KPMG used these behavioral markers to move its workforce from high adoption to genuinely high-quality use.

Inside organizations, the gap that now matters most is not between those who use AI and those who don't, but between those using it as a task accelerator and those using it as a reasoning partner that meaningfully extends what they can think through and decide.

An analysis of industry-level data from 2017 to 2024 found that each standard deviation of AI exposure was associated with 10% productivity gains, 3.9% job growth, and 4.8% wage growth. However, these employment benefits were concentrated in sectors where AI complements human work rather than operating autonomously. Where AI ran more independently, productivity rose without a corresponding human dividend.

The real-world employment and wage data from sectors already deep into AI adoption make the design principle clear: complementarity between human and machine generates shared gains, while autonomous AI operation captures the productivity upside without passing it on to workers.

📊AI in Enterprise Report

AI Will Reshape More Jobs Than It Replaces

With AI adoption accelerating faster than workforce strategies, this BCG analysis gives organisations a concrete timeline for the transformation now underway.

• Within 2–3 years, 50–55% of US jobs will be substantially reshaped — most workers keep their roles but face radically different expectations.

• Job elimination is real but narrower than feared: 10–15% of positions could disappear within five years.

• Organisations capturing the most AI value have upskilled more than 75% of their workforce through enterprise-wide transformation, not isolated pilots.

The competitive divide is forming now — organisations that treat workforce upskilling as a core AI investment, not an afterthought, will be the ones that successfully build effective human-AI teams at scale.

🔬Latest Humans + AI Research

AI-Induced Human Responsibility (AIHR) in AI-Human Teams

As AI takes on more decision-making work, humans don't get let off the hook—they actually become more accountable in others' eyes.

• Working alongside AI increases the moral responsibility attributed to the human decision-maker, not decreases it—the human becomes the sole moral agent in the room.

• People perceive AI as a constrained tool rather than a genuine partner, so accountability concentrates upward onto whoever holds the human role.

• This 'responsibility transfer' effect is robust across contexts, meaning your team members cannot expect AI involvement to dilute blame if decisions go wrong.

Does this heightened attributed responsibility actually improve human judgment and oversight, or does it simply increase anxiety without changing behavior?

🌐From Humans + AI Community

Barry Barressi on Better Judgment for a More Intelligent Era: "The real challenge facing social innovation leaders is judgment. More specifically, it is about strengthening leadership judgment under pressure within social systems that are shifting faster than many leaders can keep up with in real time."

🎧Humans + AI Podcast

Nina Begus on artificial humanities, AI archetypes, limiting and productive metaphors, and human extension (AC Ep38)

Why you should listen

What if the metaphors we use to describe AI are quietly shaping — and limiting — how we build and interact with it?

This conversation digs into the emerging field of "artificial humanities," exploring how archetypes, cultural narratives, and language itself frame our understanding of machine intelligence.

Nina Begus challenges listeners to interrogate the stories we tell about AI, distinguishing between metaphors that constrain our thinking and those that genuinely open new possibilities. After listening, you won't casually reach for "the AI thinks" or "the AI feels" without pausing to ask what that framing is actually doing to you.

Join the Humans + AI Explorers community

The Humans + AI Explorers community brings together forward-thinking professionals exploring what it means to work, think, and lead alongside AI, with weekly events, curated discussions, and direct access to the people shaping this transition. Free membership is available, with a premium tier for deeper access.

Thanks for reading!

Ross Dawson and team