Humans + AI with Ross Dawson

AI for better understanding, building a Humans + AI brain, agent and human orchestration, and more

This week, in between keynotes in Bali and Kathmandu, I have been developing a thesis on why "humans-in-the-loop" as commonly understood is usually (though not always) the wrong Humans + AI structure.

It's fair to characterize what people mean by humans-in-the-loop (HITL) as a human giving final approval to an AI decision or recommendation. That model is flawed for a range of reasons, not least that over time it erodes not only human involvement but the very human judgment it depends on to work.

Designing workflows and structures that make the best use of human judgment, and indeed improve it, is critical.

Have a great week! Ross

💡Signal of the week

The term "understanding" seems to be gaining considerable traction at the moment. There is a long-standing debate over whether LLMs "understand," with most concluding that they do not.

But in a Humans + AI context, the real issue is whether our use of LLMs increases our understanding. That matters far more than simply acquiring knowledge. Everything in the Humans + AI frame is implicitly built around this idea: we need to understand better as humans, assisted by AI, the better to decide, act, and create the futures we want.

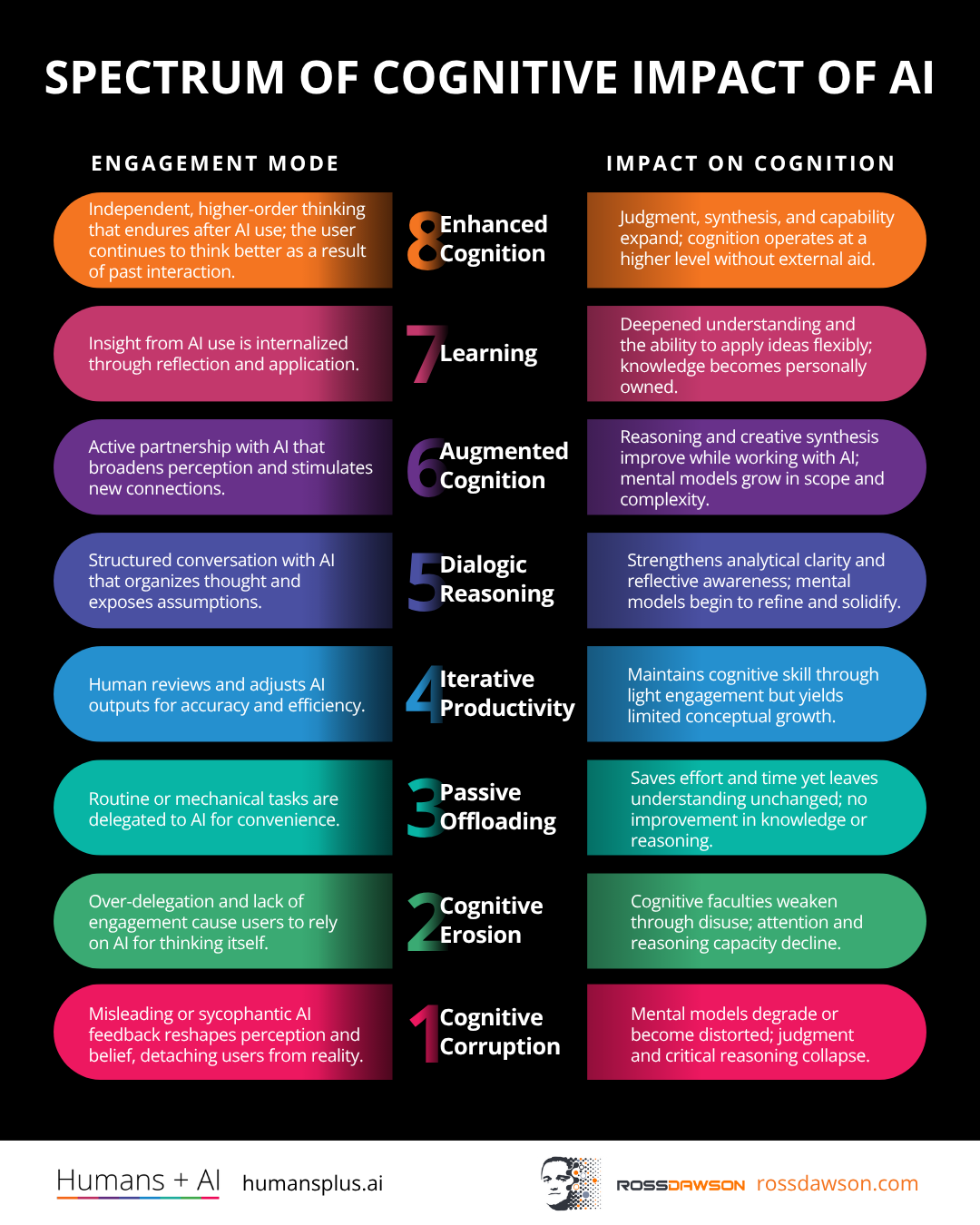

🖼️Framework: 8 Levels in the Spectrum of Cognitive Impact of AI

When you're trying to figure out whether your AI habits are actually helping you think better — or quietly making you worse at it — the 8 Levels in the Spectrum of Cognitive Impact of AI gives you a clear map of where you stand. Think of a colleague who copy-pastes AI answers without reading them versus one who uses AI to stress-test their own reasoning: both are "using AI," but the cognitive outcomes are worlds apart. If you want to audit how you or your team engages with AI, this is a great place to start — explore the full breakdown here.

📅Building an Evolving ‘Humans + AI’ Brain in Cowork

Following his previous session, Ross will demo his rapidly evolving "Humans + AI brain" setup using Claude Cowork and GitHub, in a session timed for Europe/Asia time zones. The system can be used across machines to build self-improving memory and systems for managing ventures, projects, idea development, and more.

⚡This week’s signals

Leaders' visibility into the short-term future has collapsed under AI's accelerating rate of change, undermining the criteria executives traditionally use to commit to forward-looking investments. HBR frames this fog as a structural feature of the AI era — not a transitional discomfort to be waited out.

Signals that planning horizons are now shorter than the technology cycles they're meant to plan for — making strategic patience and reversibility the new core executive competencies.

MIT Technology Review names agent orchestration — the layer that coordinates multiple AI agents and routes work between humans and machines — as one of the year's pivotal technologies, framing it as the bottleneck that determines whether agentic systems deliver value or chaos. The piece argues the next wave of enterprise AI value will come from orchestration design choices, not raw model capability.

Signals that the leverage point in agentic AI has shifted from individual agents to the architecture connecting them, making division-of-labor design a board-level capability, not an engineering detail.

Drawing on a wave of MIT, Harvard, Microsoft and Wharton research, this piece argues that generative AI delivers 14-40% short-term performance gains while eroding the critical thinking, memory and independent judgement it claims to augment. The fix is metacognitive — learning when to offload to AI and when to do the hard thinking yourself.

Signals that the question is shifting from "can AI do this?" to "should we let it?" — and the answer hinges on preserving the cognitive muscle that makes humans worth augmenting at all.

OpenAI — the company most responsible for the narrative that AI replaces human creativity — is hiring human writers, designers and editors at $200K+ salaries even as it shuts down its Sora consumer video app. A pointed contradiction about where genuine creative value still resides.

Signals that even the firms selling "AI replaces humans" need humans to make their AI usable — a tell about which kinds of creative work are still scarce, expensive, and worth paying for.

📊AI in Enterprise Report

The AI Transformation Manifesto

With fewer than 10% of enterprises having scaled AI agents to measurable value, McKinsey's manifesto arrives as a direct challenge to how most organisations are approaching transformation.

• Workflow redesign must precede tool rollout: deploying AI on top of existing processes consistently fails to deliver at scale.

• Agent-first operating models, not AI-augmented legacy structures, define how leading organisations are redesigning work.

• Leadership must actively reallocate work, not simply add AI capability alongside unchanged roles and responsibilities.

Organisations that treat human-AI teaming as a staffing adjustment rather than a fundamental workflow redesign will remain stuck in pilot purgatory — the architecture of work itself must change first.

🔬Latest Humans + AI Research

Imperfectly Cooperative Human-AI Interactions: Comparing the Impacts of Human and AI Attributes in Simulated and User Studies

If you're using AI simulations to evaluate AI agents before real-world deployment, this research shows you're likely misjudging what actually drives performance with human partners.

• AI simulations systematically undervalue chain-of-thought transparency — a factor that significantly shapes outcomes when real humans are in the loop.

• Human attributes like trust disposition and negotiation style influence collaborative outcomes in ways that LLM-only testing consistently fails to capture.

• In adversarial or partially-aligned settings — hiring, transactions, negotiations — simulation-validated AI agents may behave very differently once deployed with actual people.

At what level of simulation-to-human divergence should organizations require validation with human participants before deploying AI agents in high-stakes interactions?

🌐From Humans + AI Community

Winning by choosing augmentation over automation:

Organizations face a critical choice between using AI to reduce headcount through automation and using it to expand human potential through augmentation.

While automation offers immediate cost savings, a strategy centered on augmentation fosters long-term growth by leveraging human-AI coordination and maintaining organizational trust.

🎧Humans + AI Podcast

Jon Husband on wirearchy, web weaving, the relational economy, and drift diving (AC Ep41)

Why you should listen

What happens to organizations when information flows freely and authority no longer depends on hierarchy? Jon Husband coined the term "wirearchy" decades ago to describe exactly this shift — a world where power emerges from knowledge, trust, and connection rather than titles and org charts.

This conversation digs into how that idea has aged, why most institutions are still fighting the current, and what "web weaving" and the relational economy actually demand of us as humans navigating constant change. After listening, you'll find yourself questioning every top-down structure you've quietly accepted as inevitable.

Bring the humans + AI conversation to your leadership event

Ross Dawson has delivered keynotes and strategic sessions on the future of organizations, work, and value creation to leadership teams and conferences in over 35 countries. Topics span human-AI collaboration, AI-augmented strategy, future of work, and effective AI leadership. If your team is navigating these questions, please get in touch.

Thanks for reading!

Ross Dawson and team